How eFuse Uses Flagsmith for A/B & Multivariate Testing

eFuse is a web and mobile application for the esports and video game industry that helps connect gamers with professional opportunities and esports scholarships. We had the chance to speak with Brian Gorham, a Sr. Software Engineer at eFuse, about how the team has used A/B and multivariate testing with Flagsmith to learn about and improve the application experience for users.

Setting Up an Experiment

To start with, describe how you do experimentation at eFuse. What tools and processes are you using to accomplish that?

At eFuse, our experiments are all documented and tracked in Confluence. Before starting an experiment, we create a design document in Confluence that establishes the design of the experiment and the assumptions behind why we are running it. We also think through variables that might affect the test, e.g. paid users might skew the data. Once the design document is complete, we move into execution.

Execution means setting up the test in Flagsmith. For each test, we use one flag to control the UX. For the experiment, we bucket users into test or control groups in Flagsmith using segments. With the flag and segments set up, we are ready to run the experiment.
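As a rough illustration of what bucketing users into test and control groups involves, here is a minimal sketch of deterministic, hash-based assignment. It is not Flagsmith's segment logic or eFuse's code: the function names, the `"control"`/`"test"` group labels, the 50/50 default split, and the choice of the FNV-1a hash are all assumptions made for the example.

```typescript
// Hypothetical group names for a two-way test; not taken from eFuse's setup.
type Group = "control" | "test";

// FNV-1a 32-bit hash: a small, stable string hash, used here only for illustration.
function fnv1a(input: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return hash >>> 0; // force an unsigned 32-bit result
}

// Map a user to a group. The same userId and experimentKey always yield the
// same group, so a user keeps seeing the same experience across sessions.
function assignGroup(
  userId: string,
  experimentKey: string,
  testShare: number = 0.5, // assumed fraction of users placed in the test group
): Group {
  // Scale the hash into the range [0, 1).
  const bucket = fnv1a(`${experimentKey}:${userId}`) / 0x100000000;
  return bucket < testShare ? "test" : "control";
}

// Usage with made-up identifiers:
const group = assignGroup("user-123", "guided-onboarding");
console.log(group);
```

Including the experiment key in the hashed string means a user's group in one experiment does not determine their group in another.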

95% of our tests are client-side, built on React and, for mobile, React Native. The feature flag sends users down different code paths. For these tests, we are generally making bolder structural changes to the product to see what works. To know what works, the next important element comes into play: the analytics.

We have an internal “feature flag” component that controls the top-level component and the child components. That component has an “experiment” flag that, if set, pushes data to Segment and eventually Mixpanel. [Note - Flagsmith has native integrations with both Segment and Mixpanel that make this simple]. Mixpanel is where the real analysis is done, based on the cohorts created for the test.
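The idea of a flag-driven component that also reports experiment exposure can be sketched roughly as follows. This is not eFuse's internal component, and it does not call the Flagsmith or Segment SDKs: the `FlagState` shape, the `resolveVariant` helper, the `"control"` label, and the `"Experiment Viewed"` event name are assumptions for illustration, and the `track` callback stands in for whatever analytics client is in use.

```typescript
// Assumed shape of a flag as the application sees it (hypothetical).
interface FlagState {
  enabled: boolean;
  value: string | null; // variant name, e.g. "v1"
  experiment: boolean;  // when true, exposure should be reported to analytics
}

// Stand-in for an analytics client's track call (hypothetical signature).
type Track = (event: string, properties: Record<string, string>) => void;

// Pick which code path (for example, which component) a user gets, and
// report the exposure when the flag is marked as an experiment.
function resolveVariant<T>(
  flagName: string,
  flag: FlagState,
  variants: Map<string, T>,
  fallback: T, // the control experience
  track: Track,
): T {
  let variantName = "control";
  let selected = fallback;

  const value = flag.value;
  if (flag.enabled && value !== null) {
    const match = variants.get(value);
    if (match !== undefined) {
      variantName = value;
      selected = match;
    }
  }

  // Control exposures are reported too, so both cohorts can be compared later.
  if (flag.experiment) {
    track("Experiment Viewed", { experiment: flagName, variant: variantName });
  }
  return selected;
}

// Usage with made-up names; strings stand in for UI components here.
const screens = new Map<string, string>([["v1", "GuidedOnboarding"]]);
const screen = resolveVariant(
  "onboarding_experiment",
  { enabled: true, value: "v1", experiment: true },
  screens,
  "HomeFeed",
  (event, properties) => console.log(event, properties),
);
console.log(screen);
```

Passing `track` in as a parameter keeps the selection logic independent of any particular analytics library and easy to test.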

Show and Tell

What is the most impactful experiment that you have run?

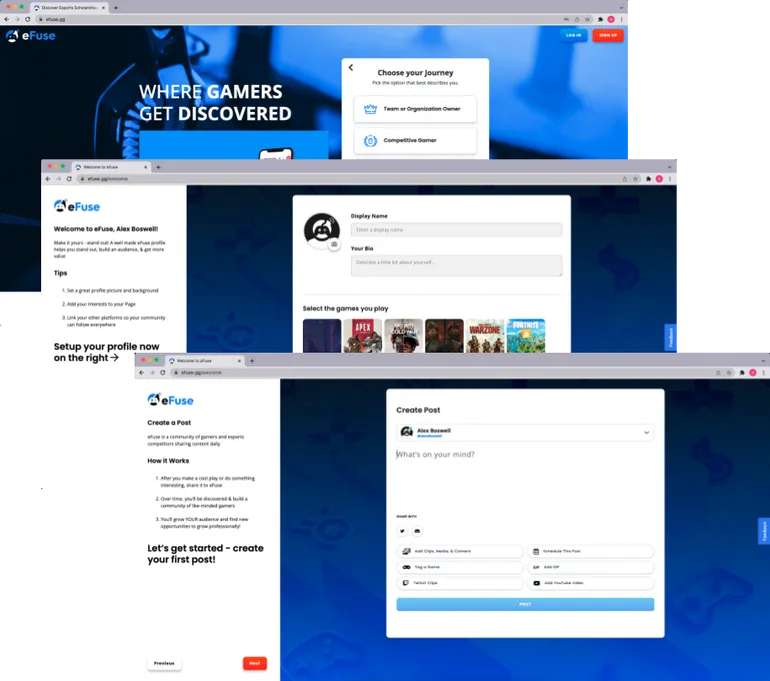

Earlier in 2021, we ran an A/B test in our onboarding process that was really interesting. As described, we started with our hypothesis: that a more guided onboarding would be more engaging than dropping users straight into the application, and that onboarded users would have higher adoption and retention. So we set up the test as described above. A portion of our users were dropped right into the application (this was our control group - Control), and a portion were taken through a four-part onboarding that had them set up their profile, follow accounts, share what games they are interested in, and link social accounts (this was the experimental group - V1).

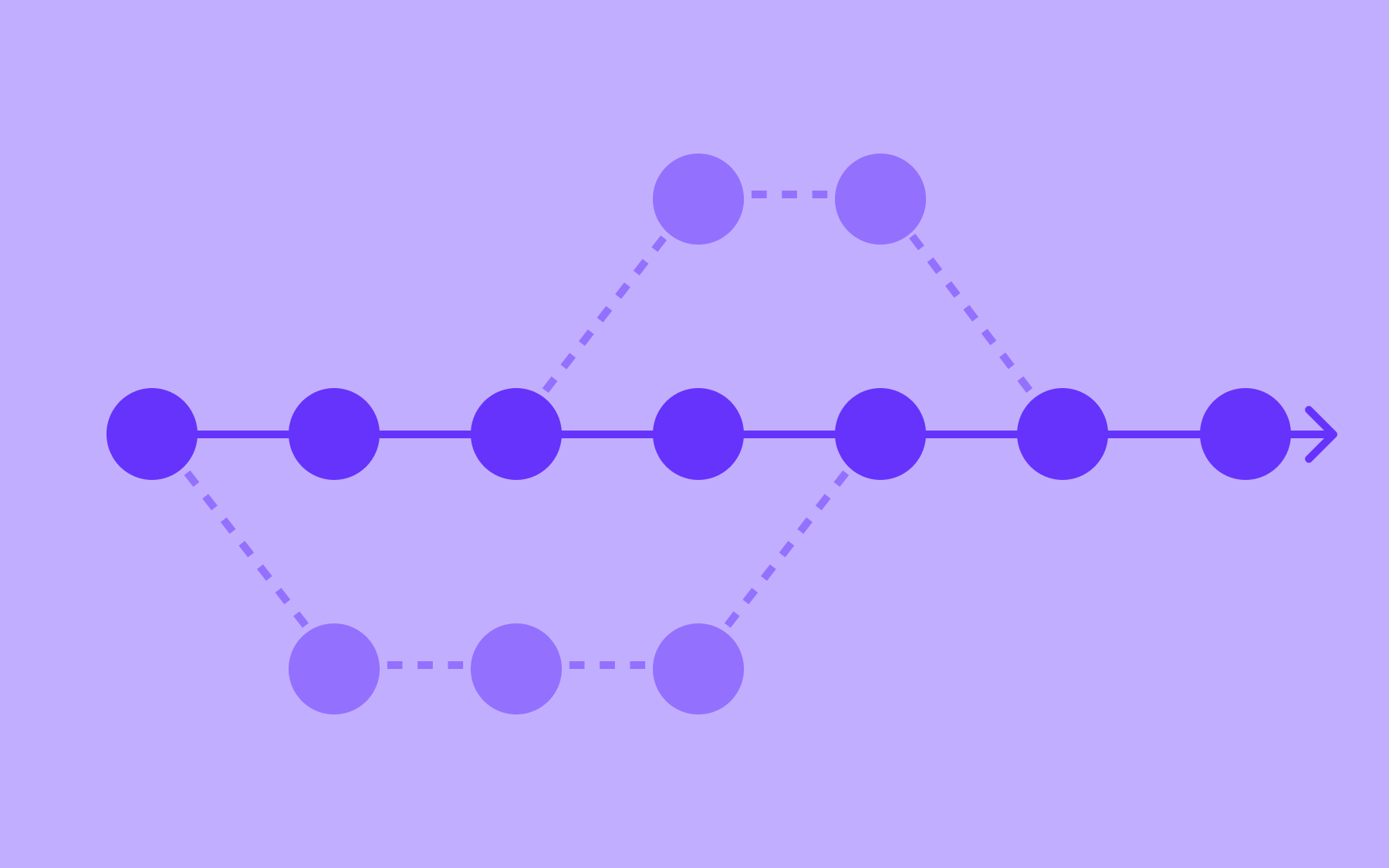

When we ran the test and looked at the data, we found that there wasn’t a significant difference in new user engagement. Instead of giving up, we thought more deeply about whether the onboarding questions or steps were the right ones, or if there were better steps that might increase adoption and retention. We adjusted our approach and ran a new test, this time a multivariate one. We kept the same two groups as above, but added a third group, whom we also asked to make a post in eFuse as part of the onboarding (a second experimental group - V2).

This time, when we got the data back, we found that V2 outperformed both Control and V1. We learned that having a user make a post was the biggest factor in retention. This was a great find, and we continue to experiment and improve the onboarding process further!
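One common way to judge whether a difference between two groups, such as Control and V1, is “significant” is a two-proportion z-test. The sketch below is a generic illustration of that test, not the analysis eFuse ran in Mixpanel; the `GroupResult` shape, the function names, the 95% threshold, and the counts in the usage example are all assumptions and do not come from the experiment described here.

```typescript
// Outcome counts for one experiment group (hypothetical shape).
interface GroupResult {
  users: number;    // users assigned to the group
  retained: number; // users who met the retention criterion
}

// z statistic for the difference in retention rate between two groups,
// using the pooled proportion for the standard error.
function twoProportionZ(a: GroupResult, b: GroupResult): number {
  const rateA = a.retained / a.users;
  const rateB = b.retained / b.users;
  const pooled = (a.retained + b.retained) / (a.users + b.users);
  const standardError = Math.sqrt(
    pooled * (1 - pooled) * (1 / a.users + 1 / b.users),
  );
  return (rateB - rateA) / standardError;
}

// Two-sided check at the 95% confidence level (critical value 1.96).
function isSignificantAt95(z: number): boolean {
  return Math.abs(z) > 1.96;
}

// Usage with made-up counts, purely to show the call pattern:
const controlGroup: GroupResult = { users: 1000, retained: 120 };
const variantGroup: GroupResult = { users: 1000, retained: 132 };
const z = twoProportionZ(controlGroup, variantGroup);
console.log(z.toFixed(2), isSignificantAt95(z));
```

With small differences in rate, even groups of this size may not clear the threshold, which is one reason a test can come back inconclusive.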

The Road Ahead

What are your goals long term for experimentation at eFuse?

We are building an engineering culture where A/B tests are built into the core feature-flagging process. The goal is to have everything that sits behind a flag tested as it is launched.

Thank You

Thank you for taking the time to share your story with us, Brian!
